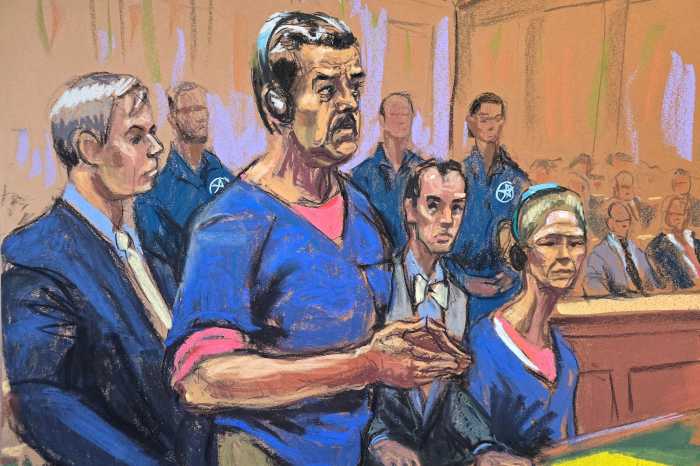

Video evidence in a child abuse case obtained through a third-party hacker accused of trading child pornography did not hold up at the state Court of Appeals as reliable enough to be used in a family court proceeding.

As a result, the appellate court dismissed a lower court’s ruling that relied on the video evidence to find that a mother in the Buffalo region failed to protect her kids from her boyfriend’s sexual abuse. While five of the seven judges of the state high court agreed with the ruling, two members railed against what they said is an impossible standard to set for fending off “deepfake” videos.

The case rested on video evidence the FBI recovered from the computer of a man in Syracuse who was being investigated for child pornography. The man, referred to by the initials B.W., claimed he had “hacked” into a security camera feed in the family’s home from across the state years prior, and happened to witness an instance in which the mother’s boyfriend sexually assaulted her 14-year-old daughter.

The family court admitted the videos, finding them reliable based on a federal investigator’s testimony and “technical experience and expertise.” During interviews with a children’s advocate, the daughter denied that she had any sexual contact with the boyfriend. The Court of Appeals reversed the decision, holding the videos were inadmissible because the prosecution failed to sufficiently prove their reliability, and that without them, there was no evidence.

“Here, the confluence of factors — including the bizarre circumstances surrounding the discovery of the videos and the long time period between their creation and their recovery — raise doubts about their authenticity, and the agency simply failed to carry its burden to dispel those doubts,” Chief Judge Rowan Wilson wrote on behalf of the court majority.

The court raised concerns that FBI Agent Martin Baranski was not an expert at identifying signs of tampering or video alteration. His simple “no” when asked if he saw signs of tampering was not sufficient proof, the majority argued. The judges referred to the precedent set by People v. Patterson, a case that found a video must be authenticated by a witness to the event, an equipment operator or expert testimony proving the video “truly and accurately” represents what was before the camera.

Wilson wrote that the case raises bigger questions about video authentication that the courts need to be wary of as AI and “deepfake” technology makes it easier to create manufactured footage that incorporates real-life details. Courts need to be more rigorous about authenticating videos, the majority concluded.

Associate Judges Shirley Troutman and Madeline Singas issued separate dissents. Troutman argued that by trying to speculatively defend against new deepfake technology “the majority creates new and perhaps insurmountable hurdles for future authentication of video evidence.”

State police investigator Gary Mahoney testified that the living room he saw when he visited the house matched the living room in the video, and that he found sex toys that also matched those depicted in the videos. The dissenting judges argued that this matching evidence, in combination with Baranski’s testimony, should have been sufficient for family court to conclude that the videos were admissible.

Singas raised the issue that, at the time that the incident occurred, AI technology was not capable of creating photorealistic deepfake videos. She questioned what type of expert testimony the court would need to produce to be able to affirmatively rule out a deepfake in the future.

“Deepfake and other emerging technologies raise thorny evidentiary challenges that our courts will need to grapple with, thoughtfully and with nuance, in cases where they are actually presented,” Singas wrote in her dissent. “The majority’s naïve analysis — essentially, saying the word ‘deepfake,’ throwing up its hands without critical thought, and returning an abused child to an abuser’s care — cannot be the way forward.”